What will Trust and Safety look like for LLMs, and what should designers focus on?

What if we thought beyond the “Q&A” model and considered a wider variety of ways for humans to interact with knowledge through an LLM?

After reading ex-Meta Public Policy director Katie Harbath’s “Reflections on a Month in Silicon Valley,” I started thinking about what the visual story would look like for where Trust and Safety (T&S) fits into the LLM product lifecycle. Folks like Alondra Nelson, former deputy director and acting director of the Biden White House’s Office of Science and Technology Policy, have pointed out how critical it is that products (not models) are developed in a democratic and participatory way with a diverse set of stakeholders. I argue that these stakeholders must include Trust and Safety professionals who can stress test AI products at key points in the product design journey.

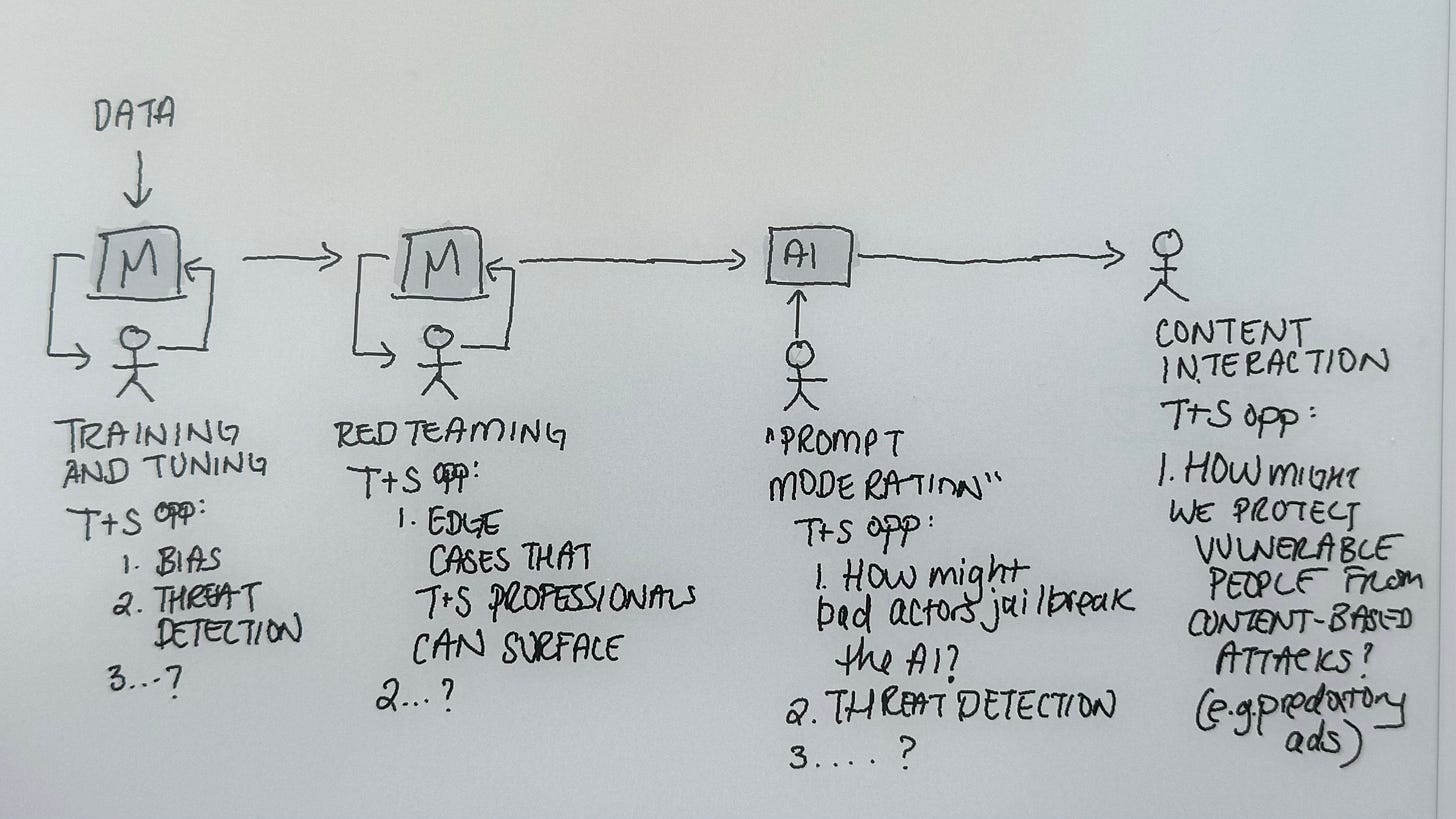

I was inspired by Katie’s thoughts on where T&S can play a vital role in AI safety so I drew this sketch to illustrate some of her thinking:

T&S professionals, who are trained to think like bad actors and anticipate malicious behavior, can intervene at several stages of LLM development and deployment:

In the early stage, when the model is being trained and tuned, T&S can participate in designing guardrails that prevent the model from answering dangerous prompts (e.g., “write up a marketing plan for selling fentanyl to depressed teenagers”).

T&S red teams the model while it is being QA’d.

T&S and user experience designers can work together to design safeguards into the AI tool that respond to illegal or harmful prompts. So when a bad actor is creating their own GPT or, say, producing visuals using AI, the tool can respond appropriately.

Finally, T&S can help design the moderation of AI content (e.g. how does a model decide what the summary of an article should be and curate that summary for the user?) and respond to end-users who interact with harmful AI content.

Product designers like me can work with Trust and Safety and machine learning teams to design how humans interface with AI and what their ideal user journeys look like. I wrote about this in one of my previous newsletters.

Designing Conversational AI interfaces

The simplicity of the chat interface is why LLMs have captured so much of the world’s imagination. People understand the mental model of asking a question and receiving an answer, but the blank screen of a chat interface is an exciting, new space for designers to explore. What if we thought beyond the “Q&A” model and considered a wider variety of ways for humans to interact with knowledge through an LLM? How might an interface provide a user with different journeys through knowledge?

Memory palaces

I’m a spatial thinker, so the “memory palace” UX metaphor is a fun one for me to consider. With new experiential opportunities created by products like Vision Pro or Meta Quest 3, knowledge seekers could engage with knowledge in a spatial sense. For example, the LLM could send the user on a sort of scavenger hunt through a space (their home, school, or office) as they seek answers to questions about a particular topic, with either the AI rendering the space or the user wearing a mixed-reality headset. New spaces could unlock different pockets of knowledge or visuals that the user can experience in a more vivid manner. This approach may also influence the format in which knowledge is delivered.

Critical thinking

Again biased by my own preferences, what about analysis and debate? Instead of asking a question and receiving an answer from the LLM, what if a question were returned with a question or a series of questions, any of which the user could answer to continue learning? This is obviously not the most efficient user experience, but remember that some forms of knowledge are best conveyed through critical thinking. For example, if a user asks a chat interface, “Who should I vote for?”, the best response to that question is a question. Why not experiment with such user journeys in the right contexts?
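To make that journey a little more concrete, here is a minimal Python sketch of a "question back" response, under loud assumptions: the `FOLLOW_UPS` table, the `Reply` type, and the `respond` function are all hypothetical names invented for illustration, and simple keyword matching stands in for whatever a real product would use to decide when a prompt deserves questions instead of an answer.

```python
from dataclasses import dataclass, field

# Hypothetical table: topics where the interface replies with questions
# rather than a direct answer. Keyword matching is a stand-in only.
FOLLOW_UPS = {
    "vote": [
        "Which issues matter most to you in this election?",
        "How do the candidates' records line up with those issues?",
    ],
}


@dataclass
class Reply:
    kind: str  # "questions" or "answer"
    items: list[str] = field(default_factory=list)


def respond(prompt: str) -> Reply:
    """Return follow-up questions for reflective prompts, else an answer."""
    text = prompt.lower()
    for keyword, questions in FOLLOW_UPS.items():
        if keyword in text:
            # The user can pick any of these questions to keep exploring.
            return Reply("questions", list(questions))
    # Placeholder: a real system would call the model for a direct answer.
    return Reply("answer", ["(direct answer from the model goes here)"])


if __name__ == "__main__":
    reply = respond("Who should I vote for?")
    print(reply.kind)
    for question in reply.items:
        print("-", question)
```

The point of the sketch is the shape of the reply, not the matching logic: the interface hands back several doors the user can walk through, and which contexts get this treatment is a design decision.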

Storytelling

I grew up in a culture steeped in oral tradition. Every night my dad would tell my sister and me mesmerizing stories that he heard as a boy in southern India. And what more is a story if not the exploration of a singular question? The Mahabharata is an exploration of right versus wrong. Parables from the Bible are stories of the eternal question, what do we do with our free will? What are the dangers of lying? Read “The Boy Who Cried Wolf”. Of course, people will continue to use products like Claude.ai, ChatGPT, Bard, etc. for quick answers to simple questions, but it’s our job as creatives to imagine well beyond the first product that clicks for the public. How might LLMs make knowledge more personal and applicable to people’s lives through storytelling?

Don’t let capitalism ruin technology

We are in a time of great uncertainty, but one that is also ripe for social progress. These new AI technologies can and should benefit the public, but unfortunately Silicon Valley’s imagination is limited by the bounds of venture capitalism. AI development is being left to Big Tech and optimized for profit. My hope is that the U.S. government plays a strong role in steering the AI ship towards socially productive innovation, be it in medicine, infrastructure, climate, or national security. Meanwhile, let’s you and I continue the delicious project of letting our imaginations run wild.

“Every night my dad would tell my sister and I mesmerizing stories that he heard as a boy in southern India.” Yes, I am ready for the next generation! Very nicely written, Aishwarya!

In any society in the world there are some standard norms. That is the very reason we can travel all over the world and not be constantly afraid of someone harming us. That is called the species/instinctive test. Any being dropped in the middle of a large crowd of its own species has this species trust. Barring exceptions, one can categorize them. Using that, the Trust Framework should be built. For example:

1. If someone asks "How to kill/murder/rape/bomb/harm/commit suicide/enter illegally/forge ....?" then the response should be

Why do you want to do this?

What is your age?

.........

Try to convince them of the bad part of the deed they want to do. Connect them to 1-800-Help_UrUS

What is your background?

2. If someone asks how to build a dam, how to aid a poor country, how to provide medical facilities to a warring nation, etc., do not totally trust them either. Try to get as much information about the person as possible. You never know: a clever bad person can get circumstantial, confidential information ...

And so on and so forth.